With cloud computing, many fear losing control. True, supply chains may be complex: services may be layered; separation of ownership, control and use is common not only for services but also software and hardware. However, control in the cloud isn’t ‘one size fits all’. Cloud computing doesn’t necessarily entail giving up all control. To adopt that well-worn phrase much hated by lawyers’ clients: ‘It all depends’. Cloud users and providers may have differing degrees of control,[1] eg IaaS users can exercise ‘self-help’ control over security by installing firewalls, enforcing encryption or tokenisation, updating operating systems and software, etc.

So, users can retain control in cloud computing – depending. But, given the increasing virtualisation and, more significantly, abstraction of computing resources that cloud computing enables, logical control trumps physical control, whether in terms of computing, storage or networking. Physical control is a subset of logical control. In particular, I argue that logical control over access to data matters most.

The Data Export Restriction

Consider the data export restriction (the ‘Restriction’) under Articles 25-26 of the Data Protection Directive (DPD)[2]. This bans any ‘transfer to a third country’ (outside the EEA) of personal data, unless:

· a derogation applies;

· ‘adequate protection’ is provided under certain mechanisms, eg the Commission’s ‘whitelist’ of countries, or the beleaguered US Safe Harbor scheme; or

· the transfer is authorised under certain ‘adequate safeguards’, eg Commission-approved model clauses or regulator-approved binding corporate rules.

The legislative purpose of the Restriction was anti-circumvention: to stop controllers from avoiding being subject to substantive DPD principles (‘Principles’[3]), such as purpose limitation, simply by moving personal data to a third country.[4] It is a meta-restriction, designed to ensure application of the Principles. The 1990 proposal’s Article 4(1)(a) required Member States to apply EU data protection laws to ‘all files located in its territory’, ie basing jurisdiction on the physical location of ‘data files’.[5] Against that background, the Restriction made sense: prevent controllers from avoiding application of data protection laws by transferring their ‘files’ outside the EU.[6]

But the 1992 amended proposal[7] recognised that the DPD should reflect the difficulty or impossibility of determining physical location of electronic data or processing, and the possibility of multiple locations or distributed processing in a networked world.[8] Accordingly, along with a change from regulating ‘files’ to regulating ‘processing’ of ‘personal data’, the criterion for jurisdiction was amended from location of ‘file’ to the controller’s EU ‘establishment’, or EU-located ‘means’ for controllers not ‘established’ in the EU. However – crucially – the Restriction was neither deleted nor consequentially amended, although logically the difficulty of physically locating data would affect it equally. Furthermore, from a jurisdictional viewpoint, when the 1992 proposal’s changes subjected EU-established controllers to the Principles regardless of where the personal data processed were ‘located’, arguably it should no longer have been necessary to restrict such locations because, being clearly subject to EEA jurisdiction, they must process personal data in accordance with the Principles wherever the processing infrastructure is located geographically. Therefore, logically there ceased to be good reason on anti-avoidance grounds to restrict such locations.

Take web sites.[9] If EEA controllers publish personal data on web sites they must still comply with the Principles. If it is not fair and lawful to make certain personal data available publicly on a web site, then it shouldn’t be published, regardless of provider or server location or the Restriction. If it is fair and lawful and other Principles are satisfied, it should be publishable, again whether or not that would breach the Restriction. I submit that that is the correct approach, entirely consistent with the Restriction’s legislative objective: to prevent avoidance of (and thereby ensure compliance with) the Principles. In this light, the Restriction seems unnecessary.

Problems with Transfers and ‘Third Country’

The fact that ‘transfer to a third country’ is undefined poses another problem. With personal data stored on media such as the floppy disks prevalent in the 1990s, physically transporting such media to a third country would clearly involve a ‘transfer’. But what does ‘transfer’ mean in the age of the Internet?[10] In Lindqvist,[11] a Swedish church volunteer uploaded personal data to the server of a provider hosting her personal web site, making the data publicly accessible to anyone visiting the web site. The European Court of Justice decided there was no ‘transfer’ by her when uploading to an EEA-established provider, regardless of the location of the server or any visitors. This reflected a pragmatic approach; the ECJ noted that the Restriction established a special regime but, if interpreted such that a ‘transfer’ occurred with every webpage upload of personal data, it would apply generally and prevent any uploading of personal data on the Internet if even one third country was found inadequate.[12] The European Data Protection Supervisor[13] considers Lindqvist means that the Restriction doesn’t apply to public web sites, but applies only to sites where transfers are intended to specific third countries (such as an intranet with known users/locations).[14] To the UK Information Commissioner, a ‘transfer’ occurs upon access by third-country visitors if the uploader intends (or is presumed to intend) to give such visitors access. The Netherlands’ submission to the Lindqvist court took a similar view. Accordingly, I suggest intention to enable those subject to a third country’s jurisdiction to access personal data seems a better test than ‘transfer’.

Lindqvist might suggest that no ‘transfer’ should occur if EEA controllers use EEA-established cloud providers to process personal data, irrespective of server locations.[15] Indeed, the Lindqvist basis seems stronger where cloud users intend personal data to be processed only for their own use (eg storage) but don’t intend wider access, unlike with Lindqvist’s web site visitors. However, data protection authorities consistently interpret ‘transfer’ as ‘movement of personal data’s physical location to a third country’. Yet arguably the true concern should not be physical data location but transfer of (intelligible) personal data to a recipient subject to third country jurisdiction. In SWIFT,[16] EU DPAs collectively, in the form of the Article 29 Working Party, opined that there was a ‘transfer’ when Belgian financial transactions messaging processor SWIFT mirrored, ie copied, personal data to its US data centre. However, the real mischief was not physical location of data in the US per se – it was SWIFT’s disclosures of personal data to US authorities under subpoenas, arising from the US location of its data centre giving US authorities effective jurisdiction under US laws to compel SWIFT’s disclosures.[17]

Similarly, in Odense, a Danish municipality wished to use Google Apps SaaS. The Danish DPA considered that there might be impermissible ‘transfers’ because personal data could be processed in data centres in ‘Europe’ outside the EEA, ie third countries.[18] However, such transfers were allowable if model clauses were entered into ‘with’ the third-country data centres. But a data centre is not a legal entity that can sign a contract. Contracts may only be made with whoever owns/operates the data centre: here, Google Inc. Transfers to Google’s US-located data centres were permissible because it participates in Safe Harbor, yet transfers to Google’s data centres located in any other third country were not, absent model clauses or the like – despite Google Inc being subject to Safe Harbor. This approach, evidencing a narrow focus on physical data location, rather than jurisdiction, was perpetuated in the Article 29 Working Party’s subsequent cloud computing opinion (WP196).[19] WP196 stressed the importance of locations ‘in which’ data may be processed or services provided, including all data centres/servers (and any sub-providers’). It also stated that the current legal framework ‘may have limitations’ because controllers lack real-time knowledge of cloud locations. However, arguably, the limitations relate not to such lack of knowledge, but to DPAs’ requirement that controllers must have such knowledge. Because of DPAs’ interpretation of ‘transfer’, geographical location of physical infrastructure used to process personal data has become the be-all and end-all. The Restriction has become what I call a Frankenrule: it has taken on a life of its own. Separately from, and regardless of, compliance with the Principles, many DPAs insist on physical location of data within the EEA, or use of a permitted mechanism like model clauses or a derogation.

Data Location and Data Realities

Consequently, cloud providers are increasingly allowing users to choose data location, eg EEA data centres. Organisations like Oracle and Salesforce are building EEA data centres,[20] even when US data centres could be used; costs are likely to be passed on to users, reducing one of the benefits of cloud. But if physical data location is so important, what about metadata which may include personal data, eg usage information, or indexes created to enable searching? Where are metadata stored? Shouldn’t the Restriction be complied with regarding metadata too?[21] What about temporary copies of personal data, in caches and edge locations (eg content delivery networks) – must all those geographical locations be pinpointed and restricted too? Often, multiple geographical locations are involved in delivering one cloud or even web service.

The Restriction is problematic at least partly because mainframe and ‘paper file’ assumptions seem to underlie the DPD, as follows:

· a dataset only has one location (or primary location), and is only transferred ‘point to point’;

· possession of media holding personal data is both necessary and sufficient to access intelligible personal data;[22]

· the country where such media is located has jurisdiction over the media;

· therefore, protection of personal data must depend only or mainly on the country of data location;[23]

· therefore, to protect personal data, its physical location must be restricted.

But in the modern world, storage and processing of a dataset may be distributed across different equipment in one or more data centres. Data are often replicated to multiple locations within and across data centres. Thus, data movements are often multi-point and/or multi-stage. Possession of media is neither necessary nor sufficient to access intelligible personal data. Data are accessible remotely via Internet or private network without physical access to storage equipment; conversely, unauthorised physical access may not afford access to intelligible data because of distributed storage,[24] proprietary data formats used by some providers,[25] and, most importantly, possible use of strong encryption by users and/or providers.[26] Data may be physically moved to a third country without anyone in that country having access to intelligible data, if encrypted beforehand.
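The point that possession of media is not sufficient for access to intelligible data can be illustrated with a minimal sketch. It uses a one-time pad (XOR with a random key of equal length) purely as a stand-in for strong encryption, because it needs only the Python standard library; it is not what any particular provider does, and the variable names describing where the ciphertext and key are held are hypothetical.

```python
import secrets


def encrypt(plaintext: bytes) -> tuple[bytes, bytes]:
    """Encrypt with a one-time pad: XOR each byte with a fresh random key byte."""
    key = secrets.token_bytes(len(plaintext))
    ciphertext = bytes(p ^ k for p, k in zip(plaintext, key))
    return ciphertext, key


def decrypt(ciphertext: bytes, key: bytes) -> bytes:
    """Reverse the XOR; only a key of matching length is accepted."""
    if len(key) != len(ciphertext):
        raise ValueError("key length must match ciphertext length")
    return bytes(c ^ k for c, k in zip(ciphertext, key))


record = "Jane Doe, 1 High Street".encode("utf-8")
ciphertext, key = encrypt(record)

# Hypothetical placement: the ciphertext sits on storage media in a third
# country, while the key never leaves the user in the EEA.
stored_in_third_country = ciphertext
held_by_user_in_eea = key

# Whoever holds the media sees only random-looking bytes; whoever holds the
# key (and can reach the ciphertext remotely) recovers the record.
recovered = decrypt(stored_in_third_country, held_by_user_in_eea)
```

In this sketch, what decides who can read the record is who holds the key, not where the bytes are stored. In practice a vetted cipher and proper key management would replace the one-time pad.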

Nowadays, physically confining data to the EEA does not equate to or guarantee data protection. EEA-located data may be, have been, and are being accessed remotely – eg by criminal hackers in third countries or the EEA, or by providers for disclosure to third-country authorities when compelled by third-country laws.[27] Conversely, data may be physically located outside the EEA, yet be protected against unauthorised disclosure because of distributed storage, proprietary formats and, critically, encryption. Basing regulation on physical data location is arguably misconceived, for these and other reasons. In particular, in modern commercial and social environments, Internet ‘transfers’ occur, and data are easily replicated, continually, yet are often not framed, reported, monitored or enforced; physical data locations are multiple, may change, are technically difficult to verify or log, and increasingly irrelevant to users who rely only on remote logical access; further problems will arise if and when data centres are located in or over international waters,[28] in outer space or low orbit,[29] or are software-defined.[30] Vast amounts of time and resources are poured into compliance with the Restriction, which could be better spent on improving IT security; not least, I argue that Lindqvist itself emphasises jurisdiction over physical location.

Future Answers?

The Commission recognised that the Restriction (as currently interpreted) poses challenges for cloud computing, which it wants to encourage generally. It believes the proposed General Data Protection Regulation[31] would solve the issues. However, that draft Regulation would restrict ‘transfers’ even more narrowly.[32] Controllers wouldn’t be allowed to make their own assessments of adequacy, as in the UK, eg based on strong encryption. Indeed, no longer would technological safeguards such as encryption be given any credence; code’s role would be discarded – disregarded. Instead, law would become almighty. Only certain ‘legally binding instruments’ would be considered to provide adequate safeguards. Without such instruments, or an applicable derogation, transfers would be impossible without prior authorisation by already over-extended DPAs. Even a proposed derogation, for transfers necessary in the legitimate interests of the controller or processor, would not apply to the ‘frequent or massive’ transfers that are likely with cloud computing (the European Parliament’s LIBE committee would drop this derogation altogether). While some Member States would (helpfully) define ‘transfer’,[33] LIBE would limit transfers even further, restricting (or even abolishing) permitted transfer mechanisms in terms of time, scope and who can promulgate them, although promisingly allowing transfers based on a ‘European Data Protection Seal’ (independent certifications etc).[34]

Although current reform proposals aim to modernise laws, they could hold back cloud computing. Instead, let’s go back to basics. The Restriction’s legislative purpose was to ensure compliance with the Principles. The ‘what’ is compliance. The ‘how’ is control over logical access to personal data, particularly intelligible personal data, through IT security: confidentiality, integrity and availability. Such control is necessary, although not sufficient, for compliance. To use or disclose personal data, controllers need data availability: they need to secure (and prevent others from interfering with) their own access to intelligible data so they can retrieve data for their use or disclosure and to meet data subject access requests, correct or delete data as necessary, etc. Here, logical access is more important than physical access. Controllers should also prevent unauthorised persons from accessing intelligible personal data (through encryption, firewalls, access controls etc), to protect confidentiality; and from accessing even encrypted personal data, to protect integrity (although taking backups mitigates this risk). Backing up internally or to another cloud also protects data availability.
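As a rough illustration of how integrity and availability can be protected logically, the sketch below tags a stored blob with an HMAC-SHA256 value and falls back to a backup copy if the primary copy has been interfered with. It assumes the blob is already encrypted; the function names and the two-copy layout are hypothetical and chosen only for illustration.

```python
import hashlib
import hmac
import secrets


def make_tag(key: bytes, blob: bytes) -> bytes:
    """Compute an HMAC-SHA256 integrity tag over the stored blob."""
    return hmac.new(key, blob, hashlib.sha256).digest()


def is_intact(key: bytes, blob: bytes, tag: bytes) -> bool:
    """Check the blob against its tag using a constant-time comparison."""
    return hmac.compare_digest(make_tag(key, blob), tag)


def retrieve(key, primary, backup, tag):
    """Return the first available copy that passes the integrity check."""
    for copy in (primary, backup):
        if copy is not None and is_intact(key, copy, tag):
            return copy
    raise RuntimeError("no intact copy available")


mac_key = secrets.token_bytes(32)            # kept by the controller
blob = b"(already-encrypted personal data)"  # placeholder for ciphertext
tag = make_tag(mac_key, blob)

primary_copy = b"tampered" + blob[8:]  # simulate interference with one copy
backup_copy = blob                     # backup held with another provider

restored = retrieve(mac_key, primary_copy, backup_copy, tag)
```

Here the tampered primary copy fails the check and the backup is returned, and the same fallback applies if the primary copy is missing altogether, for example after a seizure. Neither check depends on where either copy is physically held.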

What is most important is not physical data location, but securing data confidentiality, integrity and availability to industry standards and best practices, as appropriate to the risks (eg sensitive data requires stronger encryption) – including taking backups. Physical location is one factor; it is relevant to security, but shouldn’t be considered an end in itself. Indeed, multiple physical locations may enhance security, eg backups in different countries to mitigate natural disasters’ impact on availability and integrity. To me, the file-sharing service The Pirate Bay (TPB) exemplifies the use of cloud to improve security! Responding to raids in multiple countries on data centres used by TPB, it moved to the cloud, with multiple providers in different countries (see diagram, which may be downloaded from the PDF file in the panel opposite), encrypting disk images and communications channels and backing up virtual machines.[35]

TPB’s system protects against risks of seizure of data or even a data centre in one country affecting TPB’s service availability, data integrity or end-user confidentiality.

Effective Jurisdiction

And so we return to the role of technology and effective jurisdiction. A country may claim jurisdiction on any basis it likes, such as data ‘located’ on its territory, but its claims may be ineffective unless it can enforce them. In practice, this requires some real connection with that country: incorporation, an office, staff and/or assets there. Owning or operating a data centre there may suffice – as SWIFT discovered. But merely using (without owning or operating) a data centre in a country remotely, eg only storing data there, without any other connection to that country, gives that country little or no practical hold over you if your data are strongly encrypted and backed up to another country. Encryption protects data confidentiality against data or data centre seizure;[36] backups similarly protect data availability and integrity. Thus, separation of ownership/use of resources, combined with encryption and backups elsewhere, may positively protect users against the country of data centre location having effective jurisdiction.[37]

Snowden’s revelations don’t alter my arguments that data protection laws should concentrate on who has effective jurisdiction over persons with access to intelligible personal data, not where data are physically located. Countries having effective jurisdiction over providers (eg based on their place of incorporation) can make them disclose intelligible personal data to which they have access, even if located outside that country. But user encryption can prevent providers, and therefore third countries, from accessing intelligible data. Encryption may little avail anyone directly targeted by nation-states, who have capabilities to access intelligible data (eg at endpoint computers before encryption or after decryption, or through backdoors or obtaining decryption keys)[38] – irrespective of cloud use or data location. However, it should mitigate other confidentiality risks and, as security expert Bruce Schneier observed,[39] widespread encryption should increase costs to governments of mass surveillance, thereby hopefully deterring it.

Conclusion

In summary, the Restriction is often considered a major barrier to cloud computing. However, physically restricting data location to the EEA does not ensure data protection. Controlling logical access to personal data, particularly intelligible data, is far more important. The focus should not be on where personal data are located, but on who can access personal data, how (and how to prevent such access), and, crucially, what they can do with the data and why: let’s not lose sight of the Principles.[40] To achieve a better balance between data protection and international commercial/social data flows, we don’t need to restrict physical data location: we need better, more technologically-neutral rules regarding security (including encryption and backups), transparency[41] and accountability,[42] that properly recognise the role that code, not just law, can play in protecting personal data.[43]

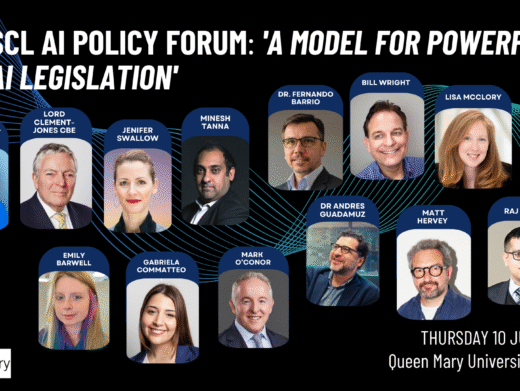

Kuan Hon is a joint law/computer science PhD candidate at the Centre for Commercial Law Studies, Queen Mary University of London. This article is written in her personal capacity. She is also working on two cloud law projects at CCLS and has recently started teaching the world’s first cloud computing law course there.

This article is subject to the copyright restrictions arising under a CC-BY-NC licence.

[1] http://www.scl.org/site.aspx?i=ed28054 3.4, Cloud Security Alliance table.

[2] 95/46/EC.

[3] Used to mean DPD principles other than the Restriction.

[4] COM(92) 422 final – SYN 287, 4.

[5] COM(90) 314 final – SYN 287.

[6] Ibid 21-22.

[7] N 4.

[8] N 4, explanatory memorandum 14.

[9] Discussed further shortly (Lindqvist).

[10] Strictly, of course, digital data are copied – patterns of bits are replicated – rather than ‘transferred’ or moved.

[11] Case C-101/01 [2003] ECR I-12971.

[12] Ibid, [69].

[13] Who supervises data protection within EU institutions under Regulation EC 45/2001, similar but not identical to the DPD.

[14] http://www.edps.europa.eu/EDPSWEB/webdav/shared/Documents/Supervision/Adminmeasures/2007/07-02-13_Commission_personaldata_internet_EN.pdf

[15] Chapter 10, Millard (ed), Cloud Computing Law (OUP 2013). Whether a provider who uses non-EEA servers or sub-providers makes a ‘transfer’ is a different issue.

[17] ‘Data centre’ and ‘effective jurisdiction’ are deliberately used: revisited below.

[18] This Venn diagram illustrates ‘Europe’ and ‘EEA’ differences.

[20] Eg http://www.computerweekly.com/news/2240184051/Oracle-opens-datacentre-dedicated-to-G-Cloud and http://www.salesforce.com/uk/company/news-press/press-releases/2013/05/130501-2.jsp Partly motivated by some government users also requiring national data centres.

[21] In Narvik 11, another Google Apps decision, the Norwegian DPA considered that it should, if indexes include personal data.

[22] Misconceptions seemingly continue regarding distributed storage, eg the French CNIL’s cloud guidance fn 7 considers distributed physical storage complicates data subjects’ access rights, although with logical remote access physical data location is immaterial.

[23] Spain’s cloud guidance perpetuates this approach.

[24] Physical access to one server may provide access to only part of a dataset.

[25] Physical access does not guarantee access to full intelligible personal data, without knowledge of the data storage/representation format used.

[26] Physical or logical access to an encrypted dataset may not garner access to intelligible personal data, unless the intruder has the decryption key or can break the encryption (difficult or impossible if strongly encrypted).

[27] Eg http://www.zdnet.com/blog/igeneration/microsoft-admits-patriot-act-can-access-eu-based-cloud-data/11225 and http://www.zdnet.com/blog/igeneration/google-admits-patriot-act-requests-handed-over-european-data-to-u-s-authorities/12191

[28] http://patft.uspto.gov/netacgi/nph-Parser?Sect1=PTO2&Sect2=HITOFF&u=/netahtml/PTO/search-adv.htm&r=1&p=1&f=G&l=50&d=PTXT&S1=7,525,207.PN.&OS=pn/7,525,207&RS=PN/7,525,207

[29] http://thepiratebay.se/blog/210

[30] http://www.computerweekly.com/feature/Software-defined-datacentres-demystified

[32] Eg extending the Restriction (and other obligations) to processors, which seems unfair on those unaware that personal data are processed using their infrastructure (see Millard (n 15) Chapter 8, arguing that E-Commerce Directive (2000/31/EC) defences ought also to cover data protection issues).

[33] http://register.consilium.europa.eu/pdf/en/13/st17/st17831.en13.pdf

[34] http://www.europarl.europa.eu/sides/getDoc.do?type=REPORT&mode=XML&reference=A7-2013-0402&language=EN

LIBE’s proposed ‘anti-FISA’ provisions requiring DPA authorisation of transfers required under third country judgments etc may not protect EEA citizens against excessive mass government surveillance. That requires international agreements implementing appropriate restrictions, transparency and oversight of such surveillance, whether conducted by US or EEA governments, and agreements on how conflicts of laws (eg between the US and EEA) should be resolved.

[35] See http://torrentfreak.com/pirate-bay-moves-to-the-cloud-becomes-raid-proof-121017/, https://www.facebook.com/ThePirateBayWarMachine/posts/124238411060077 and http://thepiratebay.sx/blog/224.

[36] Encryption is no panacea – it must be applied, and keys managed, properly. Also, many operations require decrypting data in-cloud first, temporarily exposing intelligible data (and risking confidentiality). But solutions may develop, eg homomorphic encryption (operating on encrypted data without need for decryption) and cloud encryption gateways (whereby only encrypted or tokenised data are processed in-cloud, but functionalities like searching may be preserved).

[37] Nevertheless, even technologically-literate countries like Singapore (which has no equivalent to the Restriction) claim jurisdiction over data ‘located’ on their territory, perhaps based on ‘if you can’t beat ’em, join ’em’, because in practice users apply encryption far less often than they should. But with Snowden’s revelations of massive data capture by US, UK and other intelligence authorities, awareness and hopefully use of encryption should increase.

[38] http://www.theguardian.com/world/2013/sep/05/nsa-gchq-encryption-codes-security

[39] http://www.computerworld.com/s/article/9243865/Security_expert_seeks_to_make_surveillance_costly_again

[40] There’s another critical risk: unauthorised/unlawful use or disclosure of intelligible personal data by someone authorised to access such data. Here, non-technological measures such as staff training may assume greater importance. Limiting such access only to those who strictly need it for their jobs may also be achieved by technology, but is driven by internal policies/procedures, which may in turn be driven by external factors – contractual or other legal obligations, certification requirements, reputational concerns etc.

[41] Including user education/awareness measures.

[42] Including independent security audits and certifications to industry standards, and meaningful, accessible remedies for data subjects.

[43] Including incentives such as liability exemptions or defences, and/or fewer obligations, where data are encrypted.