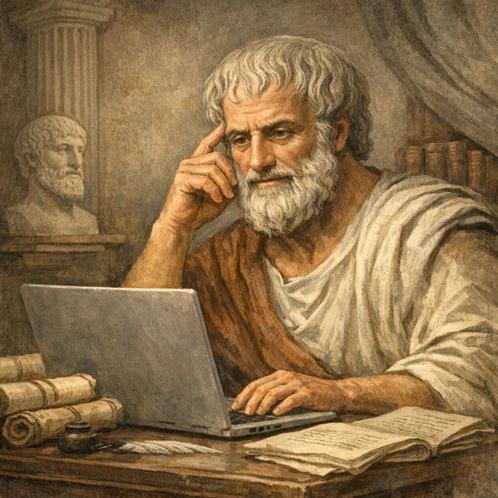

Gareth Davies is inspired by the Greek philosopher when engaging with AI.

There’s no shortage of advice on how to prompt AI: be clear, provide context, identify your audience, specify the format. Useful, but incomplete. For lawyers, prompting isn’t just a technical problem. It’s also a professional one. The danger isn’t silence; it’s receiving an answer that sounds right, feels authoritative, and covertly embeds bad judgment. Aristotle never met an AI. But his account of human excellence, virtue ethics, is a remarkably practical guide for lawyers using AI. Not because it tells you what to ask the machine but because it ‘prompts’ you to ask yourself: Who are you? What are you trying to achieve? And what does doing well actually look like?

Aristotle never met an AI, but he had a lot to say about judgment, purpose, and excellence: exactly what lawyers need most when using one.

I write from experience. I manage the commercial and legal function for a joint civil–military air traffic management program. Just one of our contracts exceeds a million words across thousands of pages. We operate in an environment of managed, audited and ongoing change, multi-stakeholder governance, and complexity that doesn’t forgive sloppy thinking. I’ve also led work exploring AI-based decision support tools to assist human judgment in complex contract change environments.

The hardest part wasn’t the technology; it was articulating what good legal work looks like with enough precision that a tool can assist without displacing judgment.

That process taught me something Aristotle already knew.

Know Thyself: The Lawyer in the Mirror

The cornerstone of Aristotle’s ethics is that excellence is not an act; it is a habit. The virtuous person doesn’t consult a rulebook. They’ve cultivated good character through practice and reflection until good judgment becomes second nature.

For lawyers, this has an uncomfortable implication when it comes to using AI. You cannot delegate judgment you do not possess.

An AI will produce output that looks like advice. No matter how imprecise or ill-informed your instruction, its response will compliment you on your question and reply with definitive authority. It doesn’t know the difference between a well-framed legal question and a poorly conceived one. You do, or at least, you should.

This is where prompting becomes a mirror. The quality of what you get back reflects the depth of your own professional understanding. Ask a junior lawyer to “summarise this contract” and they might produce a competent summary or a grand compendium. Ask a senior practitioner and they’ll know which clauses actually matter, which risks are hiding beneath the boilerplate, and which commercial outcomes the summary needs to serve. The AI doesn’t know the difference. Your prompt carries that judgment.

When I built a contract-change review agent for my program, the foundational principle I embedded was this: AI does not provide legal advice. Lawyers remain fully responsible for the advice given. If you cannot explain or defend an output, do not rely on it. That isn’t a disclaimer. It’s Aristotle in a user guide. The tool is only as good as the practitioner wielding it: your agent, your output, your responsibility.

The first Aristotelian lesson, then, is this: before you type a single word into that prompt box, ask yourself: do I actually understand what I’m asking for? If the answer is no, the AI can’t save you. It can only burnish your uncertainty.

The Litigator’s Maxim for the Silicon Age: Never ask an AI a question you do not know the answer to.

In the courtroom, this prevents disaster. In the prompt box, it prevents “hallucinations” from becoming your professional nightmare. If you can’t verify the answer, you shouldn’t be asking the question.

Know Your Purpose: Telos and the Art of Asking

Aristotle was obsessed with causation, explanation and purpose. Everything, he argued, has an end, a telos, towards which it is naturally orientated. The telos of a pen is to write. And the telos of a lawyer?

The telos of a lawyer is not the production of legal documents, but the exercise of professional judgment, applied to advancing a client’s interests, within the law.

This distinction matters enormously for how we use AI. Most prompting advice focuses on the output: draft a clause, summarise a case, generate correspondence. But Aristotle would ask us to focus on the purpose: Why are you drafting this clause? What risk are you managing? Who is the audience? What outcome does this need to support?

A lawyer who understands their purpose writes a different prompt than one who is simply completing a task. “Draft a termination for convenience clause” is a task. “Draft a termination for convenience clause that protects the Commonwealth’s ability to exit a long-term IT services arrangement without triggering disproportionate exit costs, noting the contractor has significant capital investment and will likely claim for a host of associated but not necessarily linked works” is a purposeful instruction. The AI can work with purpose. It struggles with vague tasks, as do most humans.

In complex programs, this discipline becomes non-negotiable. Where most legal risk arises not from contract signing but from the continuous process of change (variations, engineering changes, evolving technical baselines), the lawyer’s purpose shifts from “drafting” to something more like a system of decision-making. An AI prompted to “review this variation” will give you something. An AI prompted to “assess whether this proposed change alters the risk allocation under the head contract, identify any scope creep beyond the contracted baseline, and flag governance triggers requiring delegate approval” will give you something far more useful. The difference is that the second prompt carries your professional understanding of what the work actually is.

The discipline of articulating your telos before engaging the AI is not just good prompting; it’s good lawyering. In practical terms, it also makes the AI more likely to suggest better, more contextual follow-up prompts. If you can’t clearly state what you’re trying to achieve, the problem isn’t the technology.

Practical Wisdom: Phronesis in the Age of Algorithms

The lawyers who can use AI best are those who need it least.

Sit with that irony for a moment before you move on.

Phronesis, practical wisdom, is the ability to discern the right course of action in particular circumstances. It can’t be reduced to dry rules, checklists or black letter law. It’s developed through practice, reflection, and the kind of lived-in judgment that no rulebook can replace. Aristotle considered it the key virtue that binds all the others. You cannot know how to apply justice unless you know what justice requires in these specific circumstances.

This is precisely what AI lacks and the user needs to supply. It is unlikely to be patched in the next update.

AI will give you a plausible answer to almost any legal question: a well-structured, confident response that leans far, sometimes too far, into what you want to hear. This plausibility is dangerous because the trust it engenders may not be as well founded as it seems.

Phronesis is knowing when that answer is actually right and when it is not: when an interpretation that sits technically within the four corners of the document is commercially absurd; when a precedent the AI has cited comes from a different area of law or has been overtaken by subsequent authority; when a clause is legally sound yet wrong for the relationship you’re managing because it doesn’t serve the parties’ objectives.

In practice, I’ve found this plays out in a very specific way. When I use an AI agent to review contract-change documentation, I treat it like a capable junior: fast, structured, and thorough, but requiring supervision. You still need to check citations, confirm the contractual baseline, validate stated assumptions, and rewrite anything you cannot explain or support. The final work product must be yours, not the agent’s. That verification discipline is phronesis in action. It is practitioner judgment doing what no tool can do.

And there are moments where phronesis demands you stop. When a proposed change materially shifts liability or risk. When scope creep lacks a clear contractual mechanism. When approval pathways are uncertain. These are not problems prompts can resolve. They require escalation, professional judgment, and sometimes the courage to say “this needs more work” when the Program is pressing for speed. No amount of prompt engineering replaces the wisdom to know when you’ve hit the limits of what a tool can do.

Which brings us back to the irony we started with. Junior lawyers stand to benefit the most from AI as a force multiplier. They are also the most at risk, because they lack the experience and judgment to recognise a quality output. This isn’t an argument against junior lawyers using AI. It’s an argument for investing in their professional development, hand in hand with their technical development. One without the other is how errors compound quietly.

The irony doesn’t resolve. Like most wicked risks, it cannot be eliminated, only managed and minimised.

Phronesis, in this context, is the capacity to evaluate AI output not just for accuracy, but for appropriateness. And that capacity isn’t developed by using AI more. It is developed by practising law deeply, reading widely, making mistakes and learning from them.

The Advice-Structured Prompt

Don’t “chat” with AI. Structure your instruction like a formal advice:

- Issue: Define the specific question clearly.

- Background: Provide the commercial context and project history.

- The Rule: Specify the exact clauses or legislation to apply.

- Application: Map the facts to the rules.

The Takeaway: If you structure your prompt like a memo to a junior, you provide the AI with the logical scaffolding it needs to emulate your judgment.
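For readers who script their prompts, the four headings above can be sketched in a few lines of Python. This is only an illustration, not a description of any particular tool: the `AdvicePrompt` class and its `render` method are hypothetical names, and the bracketed values are placeholders you would replace with your own material. The one design choice worth noting is that it refuses to produce a prompt while any heading is blank.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AdvicePrompt:
    """A prompt laid out like a formal advice (hypothetical helper)."""

    issue: str        # the specific question
    background: str   # commercial context and project history
    rule: str         # the exact clauses or legislation to apply
    application: str  # how the facts map to the rules

    def render(self) -> str:
        """Return the prompt as labelled sections, refusing blank ones."""
        sections = []
        for f in fields(self):
            value = getattr(self, f.name).strip()
            if not value:
                raise ValueError(f"'{f.name}' is empty: state it before asking")
            sections.append(f"{f.name.upper()}:\n{value}")
        return "\n\n".join(sections)


# Placeholder values only; the bracketed text stands in for real content.
prompt = AdvicePrompt(
    issue="Does this proposed variation alter the risk allocation under the head contract?",
    background="[commercial context and project history]",
    rule="[the exact clauses or legislation to apply]",
    application="[the facts of the proposed change, mapped to those clauses]",
)
print(prompt.render())
```

The blank-field check is the useful part: if you cannot fill in a heading, that is a signal about your own understanding of the question, not a gap for the AI to fill.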

The Golden Mean: Finding Balance

Aristotle’s doctrine of the golden mean is that virtue lies between two extremes. Courage sits between cowardice and recklessness. Generosity between meanness and extravagance.

For lawyers and AI, the golden mean lies between two familiar poles: uncritical deference and reflexive dismissal.

There’s the practitioner who accepts AI output uncritically, treating it as the perfect research assistant. We’ve already seen the consequences: fabricated case citations submitted to courts, hallucinated statutory provisions embedded in advice. This isn’t an AI failure. It’s a failure of professional judgment and responsibility.

Then there’s the practitioner who refuses to engage with AI at all, who dismisses it as a fad, or who insists that “robots cannot replace lawyers.” This position may feel principled, but it is becoming increasingly untenable. The profession is moving. Clients are moving. The question is not whether AI will be part of legal practice, but whether you’ll have the practical wisdom to use it well.

The Aristotelian lawyer looks to find the mean. They use AI as a tool of augmentation, something that extends their capabilities rather than replacing their judgment. They trust, then verify and question. They bring their professional judgment to every interaction with the technology, just as they would to every interaction with a client, a colleague, or a court.

In my own experience, the mean also applies to what you build. A well-designed legal AI tool should have clear boundaries: defined review modes for different purposes, mandatory escalation points where human judgment is non-negotiable, and a reporting mechanism for when things go wrong. Design decisions made today must survive tomorrow’s audit. That discipline, building AI tools that are themselves governed, is the golden mean applied to the infrastructure of practice, not just its individual moments.
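One way to picture a mandatory escalation point is as a rule that fires regardless of what the AI says. The sketch below is illustrative only and does not describe the author’s actual tool. It uses the three stop conditions named earlier in this piece (a material shift in liability or risk, scope creep without a clear contractual mechanism, uncertain approval pathways). The names `Trigger`, `ChangeAssessment` and `escalation_triggers` are hypothetical, and it assumes the three findings are recorded by the reviewing lawyer rather than inferred by the tool.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Trigger(Enum):
    """Stop conditions that force escalation to a human."""

    LIABILITY_SHIFT = "proposed change materially shifts liability or risk"
    NO_MECHANISM = "scope creep lacks a clear contractual mechanism"
    APPROVAL_UNCERTAIN = "approval pathway is uncertain"


@dataclass(frozen=True)
class ChangeAssessment:
    """Hypothetical findings recorded by the reviewing lawyer, not by the tool."""

    shifts_liability: bool
    has_contractual_mechanism: bool
    approval_pathway_clear: bool


def escalation_triggers(a: ChangeAssessment) -> list[Trigger]:
    """Return every trigger that applies; an empty list means none fired."""
    fired = []
    if a.shifts_liability:
        fired.append(Trigger.LIABILITY_SHIFT)
    if not a.has_contractual_mechanism:
        fired.append(Trigger.NO_MECHANISM)
    if not a.approval_pathway_clear:
        fired.append(Trigger.APPROVAL_UNCERTAIN)
    return fired


# Example: liability moves and the approval pathway is unclear.
assessment = ChangeAssessment(
    shifts_liability=True,
    has_contractual_mechanism=True,
    approval_pathway_clear=False,
)
for trigger in escalation_triggers(assessment):
    print(f"ESCALATE: {trigger.value}")
```

Keeping the triggers as a fixed, named list rather than free text is what makes them auditable later: anyone reviewing the record can see which condition fired and why the matter went to a person.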

Excellence as Practice

For Aristotle, excellence (areté) was never something you arrive at. It is something you do, repeatedly, until it becomes who you are. You don’t become virtuous by reading about virtue. You become virtuous by acting virtuously, rinse and repeat.

The same is true for lawyers engaging with AI. You don’t become an excellent AI-augmented lawyer by reading endlessly about prompting (though that can be helpful, it is also a trap for the obsessive). You become one by bringing your full professional persona, your knowledge, your judgment and your sense of purpose, to every part of your legal practice.

In the programs and environments where I work, law is part of the safety system because governance, evidence, and accountability are part of safe outcomes. Every communication may be evidentiary. Every decision about authority and delegation carries consequences that compound over decades. AI doesn’t change that reality. It intensifies the need for the practitioner to understand it.

The prompt box is not a search box. It’s a place where your professional identity meets a powerful new collaborator. What you put into it says as much about your abilities as a lawyer as what comes out.

So before you type your next prompt, ask yourself the question Aristotle would have asked, not of the machine but of you:

Do I know what excellence looks like? And if the answer came back wrong, would I know?

Gareth Davies is the OneSKY Commercial & Legal Manager at Airservices Australia, where he manages commercial and legal strategy for the joint Airservices–Defence air traffic management OneSKY Program. He holds a Master of Laws from the Australian National University, is admitted to the Supreme Court of NSW and practises in the ACT. The views expressed are his own and do not necessarily represent the views of Airservices Australia.